How Advertisers Manage Campaigns Today

Campaign management covers everything from launching a new ad group to rebalancing budgets across platforms based on performance. Quality depends on reaction time, data interpretation, and execution accuracy.

Example tasks advertisers perform daily

- “Pause all campaigns with ROAS below 1.5”

- “Shift $500 from Meta to Google Search—Meta CPAs are spiking”

- “Launch a new ad group for competitor keywords with $25/day budget”

- “Create 3 headline variants for our best-performing RSA”

- “Check if conversion tracking is firing on the thank you page”

- “Generate a weekly performance report for the client”
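To make the first task in the list concrete, here is a minimal Python sketch of a rule like "pause all campaigns with ROAS below 1.5". The `Campaign` record, its fields, and the `pause_below_roas` helper are hypothetical names used for illustration. The code only flips a status in memory; a real agent would apply the change through each ad platform's API, which is not shown here.

```python
from dataclasses import dataclass


@dataclass
class Campaign:
    # Hypothetical record; field names are illustrative, not a platform schema.
    name: str
    spend: float      # ad spend over the lookback window, in dollars
    revenue: float    # attributed revenue over the same window
    status: str = "ACTIVE"


def roas(c: Campaign) -> float:
    """Return on ad spend: revenue divided by spend (0.0 when nothing was spent)."""
    return c.revenue / c.spend if c.spend > 0 else 0.0


def pause_below_roas(campaigns, threshold=1.5):
    """Mark active campaigns with spend and ROAS under the threshold as PAUSED.

    Returns the names of the campaigns that were paused.
    """
    paused = []
    for c in campaigns:
        # Campaigns with no spend are skipped: there is no ROAS to judge them by.
        if c.status == "ACTIVE" and c.spend > 0 and roas(c) < threshold:
            c.status = "PAUSED"
            paused.append(c.name)
    return paused


campaigns = [
    Campaign("brand-search", spend=1000.0, revenue=4200.0),   # ROAS 4.2
    Campaign("competitor-kw", spend=800.0, revenue=900.0),    # ROAS 1.125
    Campaign("retargeting", spend=500.0, revenue=750.0),      # ROAS 1.5, not below
]
print(pause_below_roas(campaigns))  # ['competitor-kw']
```

The comparison is strict, so a campaign sitting exactly at the threshold stays active. Whether "below 1.5" should include the boundary is the kind of detail an operator would need to confirm before automating the rule.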

How We Evaluated

Existing advertising benchmarks measure platform-native features like Smart Bidding or broad optimization scores. These conflate platform capabilities with actual execution quality. We wanted to measure real campaign outcomes when managed by AI agents versus human operators.

Study Design

We analyzed 500 campaigns across Google Ads and Meta from January to December 2025. Campaigns were matched by vertical, budget range, and objective to ensure a fair comparison. Half were managed by Synter's AI agents; half by experienced human operators using standard tools.
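One way such matching could work is exact matching within strata: group campaigns by vertical, budget band, and objective, then pair AI-managed and manually managed campaigns inside each group. The sketch below illustrates that idea only. The study's actual matching procedure is not described in detail, and the budget bands, field names, and `match_pairs` helper are assumptions.

```python
from collections import defaultdict

# Hypothetical monthly budget bands in USD; the study's real bands are not stated.
BANDS = [(1_000, 5_000), (5_000, 20_000), (20_000, 50_000)]


def budget_band(monthly_budget):
    """Return the index of the band containing the budget, or None if out of range."""
    last = len(BANDS) - 1
    for i, (lo, hi) in enumerate(BANDS):
        # Bands are half-open [lo, hi), except the last one, which includes its top.
        if lo <= monthly_budget < hi or (i == last and monthly_budget == hi):
            return i
    return None


def match_pairs(campaigns):
    """Pair AI-managed and manual campaigns that share vertical, band, and objective."""
    strata = defaultdict(lambda: {"ai": [], "manual": []})
    for c in campaigns:
        band = budget_band(c["budget"])
        if band is None:
            continue  # outside every band, so it cannot be matched
        strata[(c["vertical"], band, c["objective"])][c["arm"]].append(c["id"])

    pairs = []
    for key in sorted(strata):
        group = strata[key]
        # zip stops at the shorter list, so unmatched campaigns are dropped.
        pairs.extend(zip(group["ai"], group["manual"]))
    return pairs


sample = [
    {"id": "a1", "vertical": "saas", "budget": 3_000, "objective": "leads", "arm": "ai"},
    {"id": "m1", "vertical": "saas", "budget": 4_500, "objective": "leads", "arm": "manual"},
    {"id": "a2", "vertical": "ecom", "budget": 12_000, "objective": "conversions", "arm": "ai"},
    {"id": "m2", "vertical": "ecom", "budget": 30_000, "objective": "conversions", "arm": "manual"},
]
print(match_pairs(sample))  # [('a1', 'm1')]
```

In the sample, the two e-commerce campaigns fall into different budget bands and therefore stay unpaired, which shows how strict matching trades sample size for comparability.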

| Criterion | Included | Excluded |
|---|---|---|
| Budget | $1K–$50K monthly | <$1K or >$500K |
| Duration | 30+ days active | Short-term tests |
| Objective | Conversions, leads | Brand awareness only |
| Platforms | Google, Meta | Single platform only |
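The inclusion criteria can be read as a simple eligibility check. The sketch below is one interpretation, not the study's actual filter: `CampaignInfo` and `is_eligible` are hypothetical names, the budget bounds follow the "Included" column, and the platform rule assumes a campaign must run on both Google and Meta.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CampaignInfo:
    # Hypothetical record used only for this illustration.
    monthly_budget: float   # USD per month
    days_active: int
    objective: str          # e.g. "conversions", "leads", "brand_awareness"
    platforms: frozenset    # lowercase platform names


ALLOWED_OBJECTIVES = {"conversions", "leads"}
REQUIRED_PLATFORMS = {"google", "meta"}  # assumption: both platforms are required


def is_eligible(c: CampaignInfo) -> bool:
    """Apply the four inclusion criteria from the table above."""
    return (
        1_000 <= c.monthly_budget <= 50_000          # Budget: $1K to $50K monthly
        and c.days_active >= 30                      # Duration: 30+ days active
        and c.objective in ALLOWED_OBJECTIVES        # Objective: conversions or leads
        and REQUIRED_PLATFORMS <= set(c.platforms)   # Platforms: not single-platform
    )


both = frozenset({"google", "meta"})
print(is_eligible(CampaignInfo(12_000, 45, "leads", both)))                   # True
print(is_eligible(CampaignInfo(800, 60, "conversions", both)))                # False
print(is_eligible(CampaignInfo(5_000, 90, "leads", frozenset({"google"}))))   # False
```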

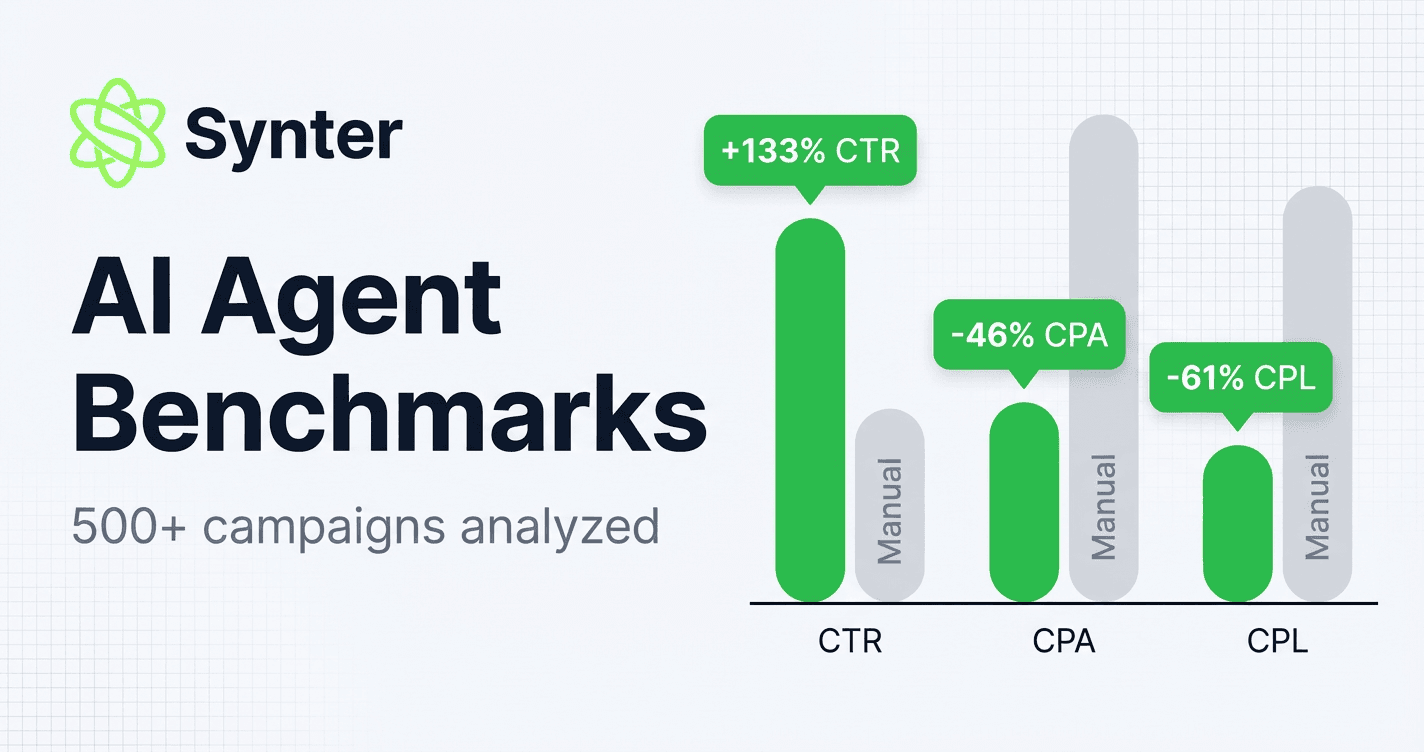

Performance Results

AI-managed campaigns outperformed manually managed campaigns on all four metrics reported below. The improvements were consistent across verticals and budget ranges.

| Metric | Synter | Manual | Δ |
|---|---|---|---|
| CTR | 4.2% | 1.8% | +133% |
| CPA | $42 | $78 | -46% |
| ROAS | 3.8x | 2.1x | +81% |
| Conversion Rate | 5.6% | 3.2% | +75% |

n=500 campaigns, 12-month study period, matched by vertical and budget
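The Δ column is the relative change of the Synter value against the manual baseline, (Synter − Manual) / Manual. The short Python check below recomputes it from the table values; the `relative_change` helper is our own name, introduced for illustration.

```python
def relative_change(treated: float, baseline: float) -> float:
    """Percentage change of `treated` relative to `baseline`."""
    return (treated - baseline) / baseline * 100


# (Synter, Manual) pairs copied from the results table.
results = {
    "CTR": (4.2, 1.8),
    "CPA": (42.0, 78.0),
    "ROAS": (3.8, 2.1),
    "Conversion Rate": (5.6, 3.2),
}

for metric, (synter, manual) in results.items():
    print(f"{metric}: {relative_change(synter, manual):+.0f}%")
# CTR: +133%
# CPA: -46%
# ROAS: +81%
# Conversion Rate: +75%
```

For CPA a negative change is the improvement, since a lower cost per acquisition is better. For the other three metrics, higher is better.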

Speed Benchmark

We measured time-to-execution for common campaign management tasks across 120 campaigns, from initial brief to first ad impression.

| Task | Synter | Manual | Improvement |
|---|---|---|---|
| Launch Time | 2 days | 14 days | 86% faster |
| Optimization Cycle | 1 day | 7 days | 86% faster |
| Reporting | 0.5 hours | 4 hours | 88% faster |

Launch time improvements come from automated campaign structure generation, keyword research, and creative asset creation. Optimization cycles accelerate because AI agents monitor performance continuously rather than in weekly review cycles.
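The "% faster" figures in the speed table are reductions in elapsed time, (Manual − Synter) / Manual. The same data can also be expressed as a speedup factor, Manual / Synter. The sketch below computes both from the table values; the helper names are ours, and each row is assumed to use the same unit for both columns.

```python
def time_reduction_pct(synter: float, manual: float) -> float:
    """Share of the manual duration that is saved, as a percentage."""
    return (manual - synter) / manual * 100


def speedup_factor(synter: float, manual: float) -> float:
    """How many times shorter the Synter duration is than the manual one."""
    return manual / synter


# (Synter, Manual) durations from the speed table: days, days, hours.
tasks = {
    "Launch Time": (2, 14),
    "Optimization Cycle": (1, 7),
    "Reporting": (0.5, 4),
}

for task, (synter, manual) in tasks.items():
    reduction = time_reduction_pct(synter, manual)
    factor = speedup_factor(synter, manual)
    print(f"{task}: {reduction:.1f}% less time, {factor:g}x speedup")
# Launch Time: 85.7% less time, 7x speedup
# Optimization Cycle: 85.7% less time, 7x speedup
# Reporting: 87.5% less time, 8x speedup
```

Rounded to whole percentages, these values match the 86% and 88% shown in the table.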

Methodology Notes

Performance Study: 500 campaigns matched by vertical, budget, and objective. Synter-managed campaigns used AI agents for all optimization decisions. Control campaigns were managed by PPC professionals with 3+ years of experience using standard tools (Google Ads Editor, Meta Business Suite, third-party bid managers).

Multi-Platform Study: Subset of campaigns running on 3+ platforms simultaneously. Measured ROAS improvement from unified budget allocation versus siloed platform management.

Speed Benchmark: 120 new campaign launches tracked from initial brief to first ad impression. Manual campaigns followed standard agency workflow (brief → strategy → build → QA → launch).

Conclusions

In this benchmark, AI agents outperformed manual campaign management across performance metrics, platform coverage, and execution speed. The advantages compound: faster optimization cycles lead to better performance data, which enables better budget allocation, which improves overall ROAS.

The gap is largest for cross-platform campaigns. Human operators struggle to maintain optimization velocity across 6+ platforms. AI agents treat each platform as another API endpoint, so adding Microsoft Ads or Reddit doesn't meaningfully increase operational complexity.

We'll continue publishing benchmark updates as we expand platform coverage and gather more campaign data.